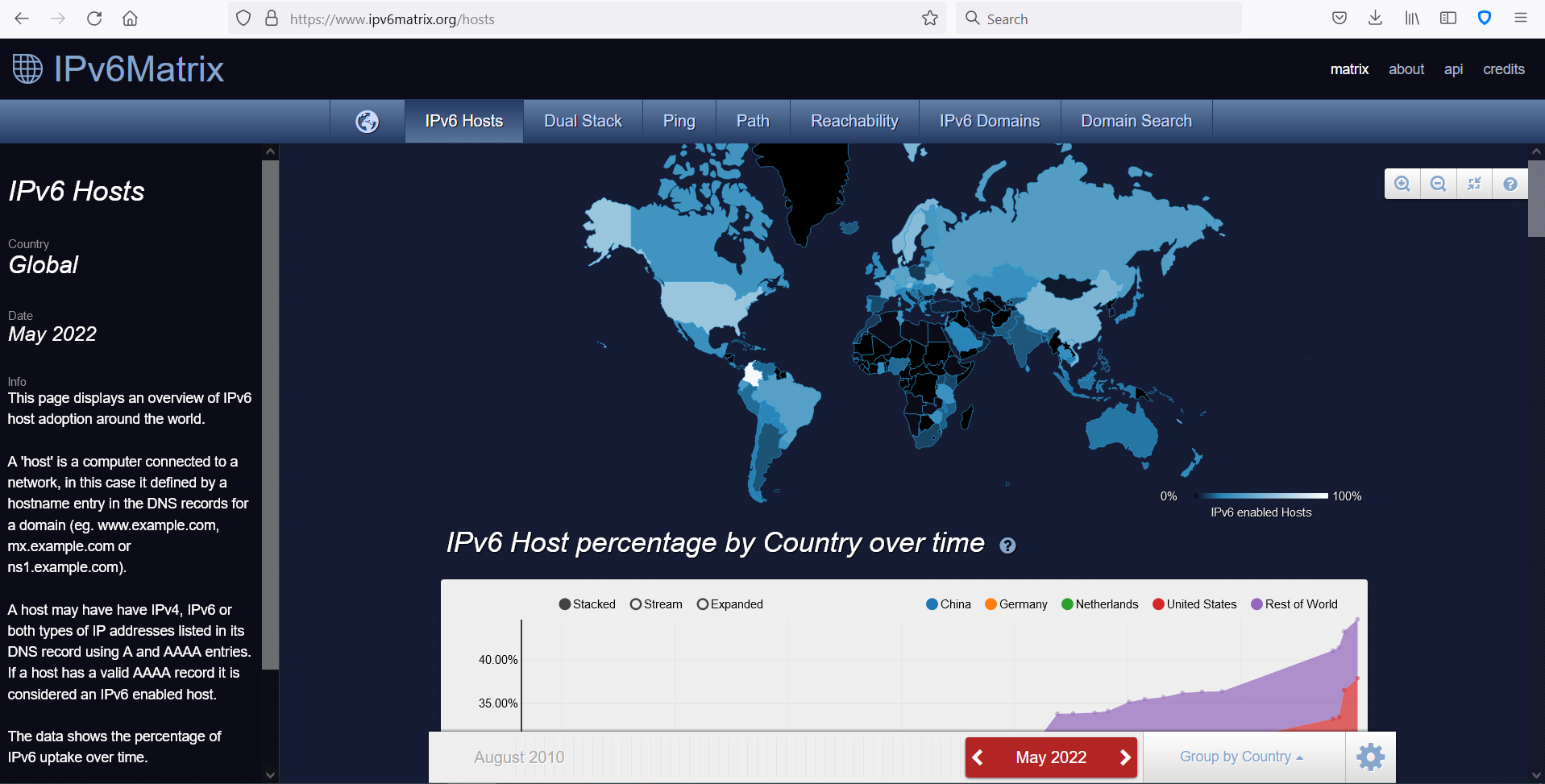

The IPv6 Matrix has been measuring the use of IPv6 in the world’s 1 million most popular Web Sites since 2010. The project has collected over 500 GB of data relating to the spread of IPv6 worldwide. Its two servers (one crawler and one web server) were 14 years old when, in 2020, it was time to replace them with modern technology. At the December 2019 IPv6 Council Annual General Meeting, the Chapter received a pledge from UK IPv6 hosting company Mythic Beasts to host the project on virtual machines free of charge, provided the migration was undertaken by the Chapter.

In 2020, a funding proposal was submitted to the Internet Society Foundation. It explained the problem with the physical servers:

The trouble is that the two servers that the project runs on are now way past their useful life and risk breaking down, with the risk of losing the invaluable information amassed in 10 years of operation. A new sponsor has stepped forward to offer gratis virtual hosting. But the project needs to be transferred over to the new virtual environment and this involved some significant programming.

The grant application, for a total of $24 700, was accepted and the work could proceed. However, on the first day of the preliminaries, the Web server crashed whilst two members of the Team were surveying the Web server system. The crash was serious: the server could not be restarted.

The next steps are best explained in the project’s formal interim report for the Internet Society Foundation: Internet Society Foundation IPv6 Matrix Grant Interim Report 1.

After more than a year of delay caused by the COVID-19 pandemic, which locked down the University of Southampton campus and made physical access to the servers impossible, it became possible to decommission the two servers, put them in storage, and then ship them to London so that the information they contained could be recovered manually. In a status update dated 9 March 2021, Olivier Crépin-Leblond wrote:

-------- Forwarded Message --------

With the servers physically leaving the University of Southampton, this closes an important chapter that was opened when the Group Design Project (GDP) was confirmed for three students to work under the supervision of Dr. Tim Chown. This was back on 25 September 2013. I delivered the two servers at the University of Southampton on 28 October 2013, to take residence in Building 53. They never stopped collecting data ever since, until their recent crash. Let’s hope we can revive them long enough to virtualise them.

-------- End Forwarded Message --------

The plan was to have a specialist in London examine the two servers and evaluate whether the data on their disks was salvageable. If any team could find out, it was the people who had put the two servers together 10 years earlier. The folks at 2020Media therefore focussed on recovering the disks. Alan Barnett and Rex Wickham spent significant time recovering the disks manually, first transferring the raw disk images to brand new disks and then rebuilding the images to make them bootable, based on their knowledge of the file structures.

After a couple of weeks, they had extracted 100% of the data, software and complete operating system environment of both servers and installed them on two temporary VM environments. It then became possible to proceed with the next stage of the project: making a new home for the crawler and web server in a production-level virtual environment, rewriting parts of them, and re-launching at a later date.

The Chapter hired a professional contractor to do the core work of (a) updating the Web server to a new environment and (b) rewriting the Crawler from scratch so as to optimise it whilst keeping it backward compatible with the previous Crawler.

James Lawrie from SilverMouse took on the tasks as listed in the worksheet:

| Main Objectives & Activities | Plan Start / End | Person in Charge | Status | Comments |
| --- | --- | --- | --- | --- |
| Objective 1 – Preliminaries | | | | |
| Search for contractor | 1-Aug-2020 | Olivier | Complete | Includes drafting a project brief, requesting offers |
| Hire Contractor and Brief them | | Olivier | Complete | Includes conference calls with contractor and other parties |
| Contractor designs migration plan | | Contractor | Complete | |
| Contractor Sets Up VM Environment ready to receive servers | | Contractor | Complete | Includes collaboration with Mythic Beasts |
| Objective 2 – Migrate Web Server | | | | |
| Get Web Server Running with current version of Node | immediate / one week | | Complete | Needs DNS update, already works on elephant.ipv6matrix.org |
| Contractor Packages and Migrates Web Server | one week | Contractor | Complete | Code is stored in git with deployment instructions |
| Contractor Automates maintenance tasks | TBC if necessary | Contractor | Complete | Mythic Beasts handling this |
| Contractor Automates Back-up tasks | TBC if necessary | Contractor | Complete | Mythic Beasts handling this |
| Contractor tests Web Server Implementation | TBC | Contractor | Complete | |
| Objective 3 – Migrate Crawler | | | | |
| Contractor Packages and Migrates Crawler | one month | Contractor | Complete | Crawler was rewritten |
| Contractor Optimises Crawler in new environment | two weeks | Contractor | Complete | Now runs in 2-3 days |
| Contractor Automates maintenance tasks | TBC | Contractor | Complete | Mythic Beasts handle this |
| Contractor tests new Crawler Implementation | TBC | Contractor | Complete | |
| Objective 4 – Packaging | | | | |
| Contractor Creates Packages for both servers | TBC | Contractor | Complete | Code is stored in git with deployment instructions |
| Contractor Tests Packages on another location | included above | Contractor | Complete | Tested on three different servers |

The work was completed in late January 2022, and bugs were fixed throughout February. As part of the delivery, three blog posts were written. They are listed below.

- A blog post about the project itself, "Monitoring the state of IPv6 deployment with The Internet Society": https://silvermou.se/isoc-ipv6-crawler/
- A blog post about the latest run, "The state of the Internet as of January 2022": https://silvermou.se/the-state-of-the-internet-as-of-january-2022/
- A blog post questioning whether the Internet is edging towards a walled garden: https://silvermou.se/is-the-web-becoming-a-walled-garden/

The Crawler will now run monthly. Each run takes less than a week, which means more domain names can be added to the list, giving a fuller picture of IPv6 connectivity.

Looking ahead, the Chapter intends to define new projects that will follow on from the IPv6 Matrix. As the blog posts show, some of the data collected over the years can also help evaluate other Internet-wide, big-picture parameters, such as the extent of Internet technical consolidation (consolidation of Web servers, for example), the overall health of the DNS, or the geographical distribution of the Internet’s sources of information. The Team at ISOC UK is studying these as possible future projects.

If you have a project to suggest that builds on the IPv6 Matrix, please contact the Team at contact@isoc-e.org – we’d like to hear from you.